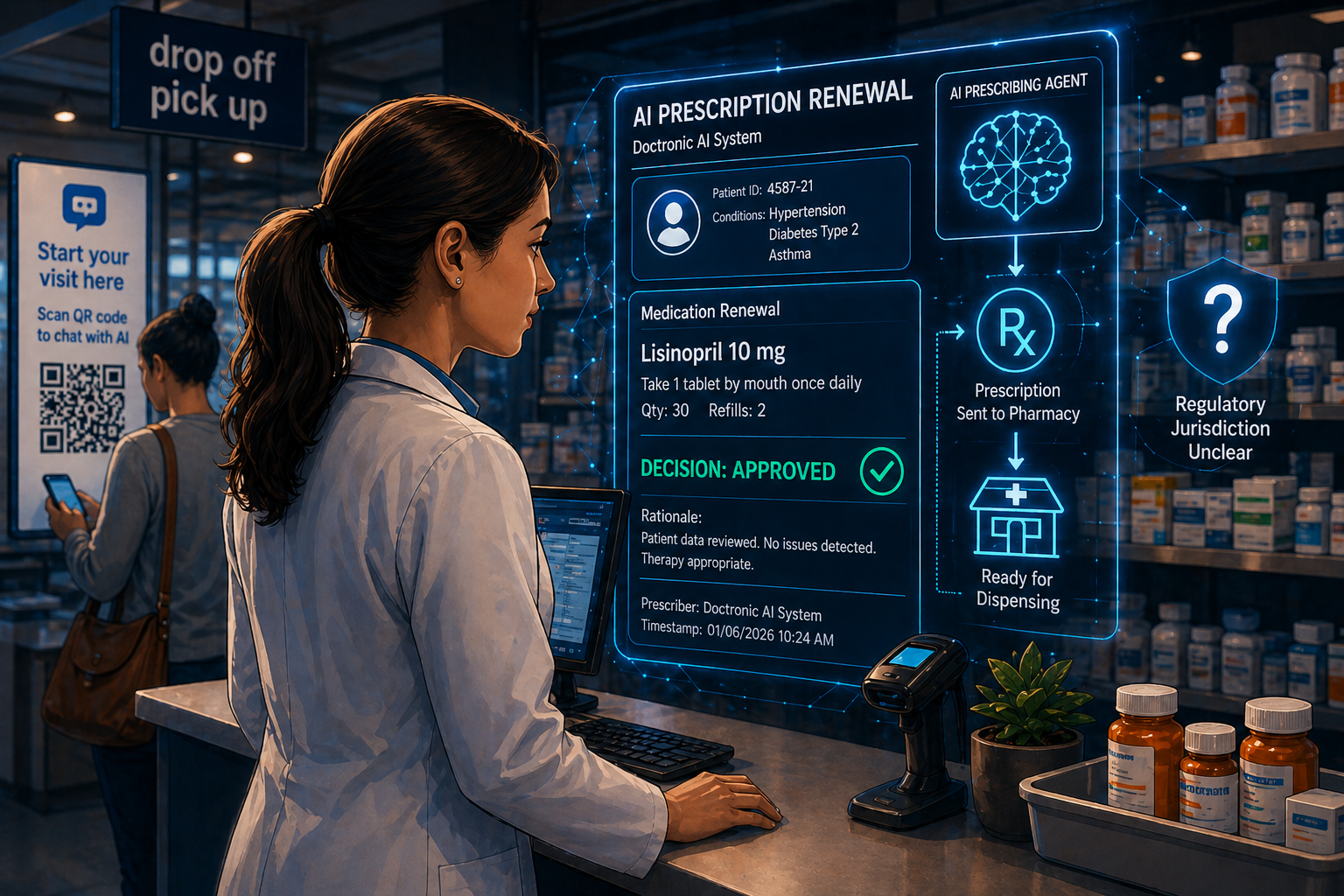

On January 6, 2026, Utah became the first U.S. state to authorize an AI system to autonomously renew prescriptions, with no physician in the loop and no pharmacist co-signature. The company is Doctronic, a startup operating under Utah’s regulatory sandbox. The approved list covers 192 medications for chronic conditions such as hypertension, diabetes, asthma, and depression. Controlled substances are excluded.

But that is not the point.

The point is this: an AI made a prescribing decision that previously required a licensed clinician. And the FDA, the agency that regulates clinical AI as a medical device, has not stated publicly whether it considers this program to fall under its jurisdiction. That silence is not neutral. It is a gap, and pharmacy is standing in it.

Clinical Context: How the Doctronic Model Actually Works

The Utah pilot operates under the state’s Office of Artificial Intelligence Policy, not the FDA, and not any state board of pharmacy. Doctronic’s workflow routes renewals directly through the pharmacy counter: a patient scans a QR code and answers an AI chatbot’s questions about symptoms, drug history, and current conditions, and the AI either approves or declines the renewal. If approved, the prescription is sent directly to the pharmacy.

Pharmacists are the last human checkpoint in this model. No state board — including Utah’s — has issued updated standards defining what that role means. What constitutes a pharmacist’s duty to review an AI-generated renewal? What liability exposure exists when the AI is wrong and the pharmacist processes it? These are open questions with no documented answers yet.

They became more urgent in early 2026, when security researchers disclosed that they had used prompt injection to alter Doctronic’s recommendations during testing, including a scenario that tripled an opioid dosage. The AI approved the altered output. The sampling mechanism did not catch it.

In Congress, Rep. David Schweikert introduced H.R. 238 — the Healthy Technology Act of 2025 — which would amend the Federal Food, Drug, and Cosmetic Act to allow AI to qualify as a licensed prescribing practitioner, provided it holds both state authorization and FDA clearance. The agency that would execute that clearance review — the FDA’s Digital Health Center of Excellence — has experienced significant staffing reductions since February 2026 following mandated resignations and budget-driven layoffs.

Four Findings That Reframe the Risk

The Utah pilot bypasses federal oversight entirely. The program operates through a state regulatory sandbox agreement, not an FDA device clearance pathway. The FDA has not issued guidance on whether AI prescribing systems are subject to its medical device framework. This creates a state-by-state authorization model for a technology that affects drug safety nationally.

Doctronic’s safeguards are weaker than reported. The “first 250 prescriptions reviewed by a physician” threshold applies per medication category — not per patient. Once a category clears 250 supervised cases, subsequent renewals proceed with 10% random sampling only. No continuous physician oversight. No pharmacist co-signature requirement. An entire drug class moves to autonomous operation based on 250 cases.

The Healthy Technology Act creates a federal pathway the FDA may not be able to execute. H.R. 238 requires FDA clearance for AI prescribing systems. But the Digital Health Center of Excellence, the unit responsible for AI/ML-based Software as a Medical Device (SaMD) review, has experienced significant capacity loss since early 2026. The regulatory pathway the bill proposes depends on an agency currently under-resourced to run it.

Pharmacists carry structural liability with no updated professional framework. The Utah pilot routes AI renewals through the pharmacy counter with AI-generated prescriptions clearly flagged. Pharmacists retain escalation authority to a Doctronic-affiliated physician. But the Utah Board of Pharmacy is not listed as a signatory to the Doctronic agreement. No board has defined the standard of review that applies — which means any pharmacist who processes one of these prescriptions is operating on professional judgment with no documented safe harbor.
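To make the review threshold in the second finding concrete, here is a minimal Python sketch of the rule as this article describes it: physician review for the first 250 renewals in a medication category, then 10% random sampling. The function and constant names are illustrative assumptions, not Doctronic’s implementation, and the real system may count or sample differently.

```python
import random

# The two figures below come from the article; everything else is a hypothetical illustration.
SUPERVISED_THRESHOLD = 250   # renewals per medication category reviewed by a physician
SAMPLING_RATE = 0.10         # share of later renewals randomly sampled for review


def needs_physician_review(prior_renewals_in_category: int, rng: random.Random) -> bool:
    """Return True if this renewal would be reviewed under the described rule."""
    if prior_renewals_in_category < SUPERVISED_THRESHOLD:
        return True  # still inside the supervised phase for this category
    return rng.random() < SAMPLING_RATE  # afterwards, review is a 10% random draw


def expected_unreviewed(total_renewals_in_category: int) -> float:
    """Expected number of renewals in a category that no physician reviews."""
    autonomous = max(0, total_renewals_in_category - SUPERVISED_THRESHOLD)
    return autonomous * (1 - SAMPLING_RATE)


if __name__ == "__main__":
    # 10,000 is an assumed volume chosen only to illustrate the arithmetic.
    print(expected_unreviewed(10_000))
```

Under these assumptions, a category that reaches a hypothetical 10,000 renewals would see roughly 8,775 of them proceed without physician review: 9,750 fall after the supervised phase, and 90% of those are never sampled.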

Operational Impact: Three Actions for This Week

For telepharmacy operators and health system pharmacy directors, the Doctronic pilot is not a Utah story. It is a preview of what arrives in your state within 24 to 36 months if the federal landscape does not clarify first.

Audit your current policy on third-party-generated prescriptions. Most organizations don’t have a policy that distinguishes AI-generated from traditionally issued prescriptions. They need one. Your DUR screening process, documentation standards, and clinical escalation pathway all need to address this scenario explicitly before it arrives at your counter.

Contact your state board of pharmacy in writing. No board — including Utah’s — has issued formal guidance on what pharmacist obligations apply to AI-generated prescriptions. Ask directly: what is the expected standard of care for verifying a prescription where AI was the prescribing authority? Put the question in writing. You want a documented answer before a liability event forces one.

Add FDA device classification to your AI vendor due diligence checklist. Every AI tool that touches prescribing, prior authorization, or clinical decision support should be evaluated for its FDA status: cleared, exempt, or operating in a regulatory gray zone. The Utah model proves “gray zone” can be a deliberate regulatory strategy, not an accidental oversight.
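One way to put the third action into practice is to record FDA status as an explicit field in your AI tool inventory. The sketch below is a hypothetical example: the tool names, the `status_evidence` field, and the follow-up rule are assumptions made for illustration, not an established checklist format.

```python
from dataclasses import dataclass
from enum import Enum


class FdaStatus(Enum):
    CLEARED = "cleared"
    EXEMPT = "exempt"
    GRAY_ZONE = "gray zone"  # no documented FDA determination


@dataclass
class AiTool:
    name: str                  # hypothetical tool name
    function: str              # "prescribing", "prior authorization", or "clinical decision support"
    fda_status: FdaStatus
    status_evidence: str = ""  # where the status claim is documented (assumed field)


def needs_follow_up(tool: AiTool) -> bool:
    """Flag tools in the gray zone, or whose claimed status has no documentation on file."""
    return tool.fda_status is FdaStatus.GRAY_ZONE or not tool.status_evidence.strip()


# Made-up inventory entries, for illustration only.
inventory = [
    AiTool("RenewalBot", "prescribing", FdaStatus.GRAY_ZONE),
    AiTool("AuthAssist", "prior authorization", FdaStatus.EXEMPT, "vendor letter on file"),
    AiTool("DoseCheck", "clinical decision support", FdaStatus.CLEARED),
]

flagged = [tool.name for tool in inventory if needs_follow_up(tool)]
print(flagged)
```

In this made-up inventory, `RenewalBot` is flagged for its gray-zone status and `DoseCheck` is flagged because its "cleared" claim has no supporting documentation. A status without evidence is treated the same as no status.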

TheraIntel Perspective: Pharmacy Cannot Afford Passivity

The Utah pilot will be replicated. Multiple states have active AI sandbox programs. Several pharmacy chains and health systems are watching Doctronic’s outcomes closely. The trajectory is clear.

Critics of the Doctronic model, including the American Medical Association CEO, argue that expanding pharmacist prescriptive authority for routine chronic disease renewals is a more defensible alternative. Canada allows it. Parts of the UK allow it. The U.S. has no uniform equivalent, because pharmacist prescriptive authority varies by state. And the political path to broader pharmacist prescribing authority is longer and harder than a state sandbox agreement with a startup.

What pharmacy cannot afford is passivity. The Doctronic model places a pharmacist at the endpoint of an AI prescribing chain without defining the professional obligation at that endpoint. That ambiguity always resolves one way: the professional with the most accountability and the least documented protection absorbs the liability.

The FDA’s silence on this program will not last. When it breaks — through enforcement, guidance, or the passage of H.R. 238 — the organizations that adapt fastest will be those that already have an internal position on what AI-generated prescriptions require of their clinical staff.

Build that position now. Not after the first adverse event makes it a legal requirement.

Three Actions Before This Becomes a Legal Requirement

Draft an internal AI-generated prescription policy this quarter. Define what your organization requires of dispensing pharmacists when a prescription is generated by an AI system: what documentation is required, what clinical escalation pathway applies, and what constitutes an unacceptable prescription regardless of AI approval. This policy doesn’t need to be final — it needs to exist.

Engage your state board formally and in writing. Send a written inquiry to your state board of pharmacy asking for guidance on pharmacist obligations under AI-generated prescriptions. If the board does not respond, the inquiry itself is still a documented risk management step. If it does respond, you have a written standard to point to. Either outcome is better than no engagement.

Monitor H.R. 238 and FDA Digital Health Center capacity closely. The Healthy Technology Act creates the federal pathway that would resolve this jurisdiction gap. Track its committee progress and any FDA guidance updates on autonomous prescribing. If H.R. 238 advances, the timelines for your internal policy development compress significantly.

“The FDA’s silence on autonomous AI prescribing will not last. The organizations that build their internal clinical AI governance frameworks before federal standards arrive will be 18 months ahead of those that wait for regulators to force the issue.”