On April 2, 2026, the FDA issued a warning letter to Purolea Cosmetics Lab in Livonia, Michigan — the first enforcement action in FDA history to name “Inappropriate Use of Artificial Intelligence” as a standalone cGMP deficiency. This wasn’t a theoretical risk document. It was a citation.

The firm had used AI agents to generate drug product specifications, standard operating procedures, and master production records. None of the outputs were independently reviewed before use. When investigators asked why process validation had never been conducted prior to product distribution, the firm’s response was direct and damning: the AI never told them it was required.

“The AI never told us” is now FDA-documented evidence of exactly what regulators have feared: AI deployed as a compliance substitute rather than a compliance aid.

For pharmacy directors, health system VPs, and clinical operations leaders who have integrated AI tools into clinical or operational workflows, this warning letter is not a manufacturing story. It is a preview of where FDA and state boards of pharmacy are heading. The regulatory framework is no longer ambiguous. It just got a citation category.

Clinical Context: The Governance Gap Is the Exposure

The Purolea letter arrives at a moment when AI deployment in healthcare has far outpaced governance. Three in four health systems now report deploying at least one AI solution — a 27% jump in a single year. Only 18% have formal AI governance structures in place. That gap between deployment and accountability is precisely where enforcement risk lives.

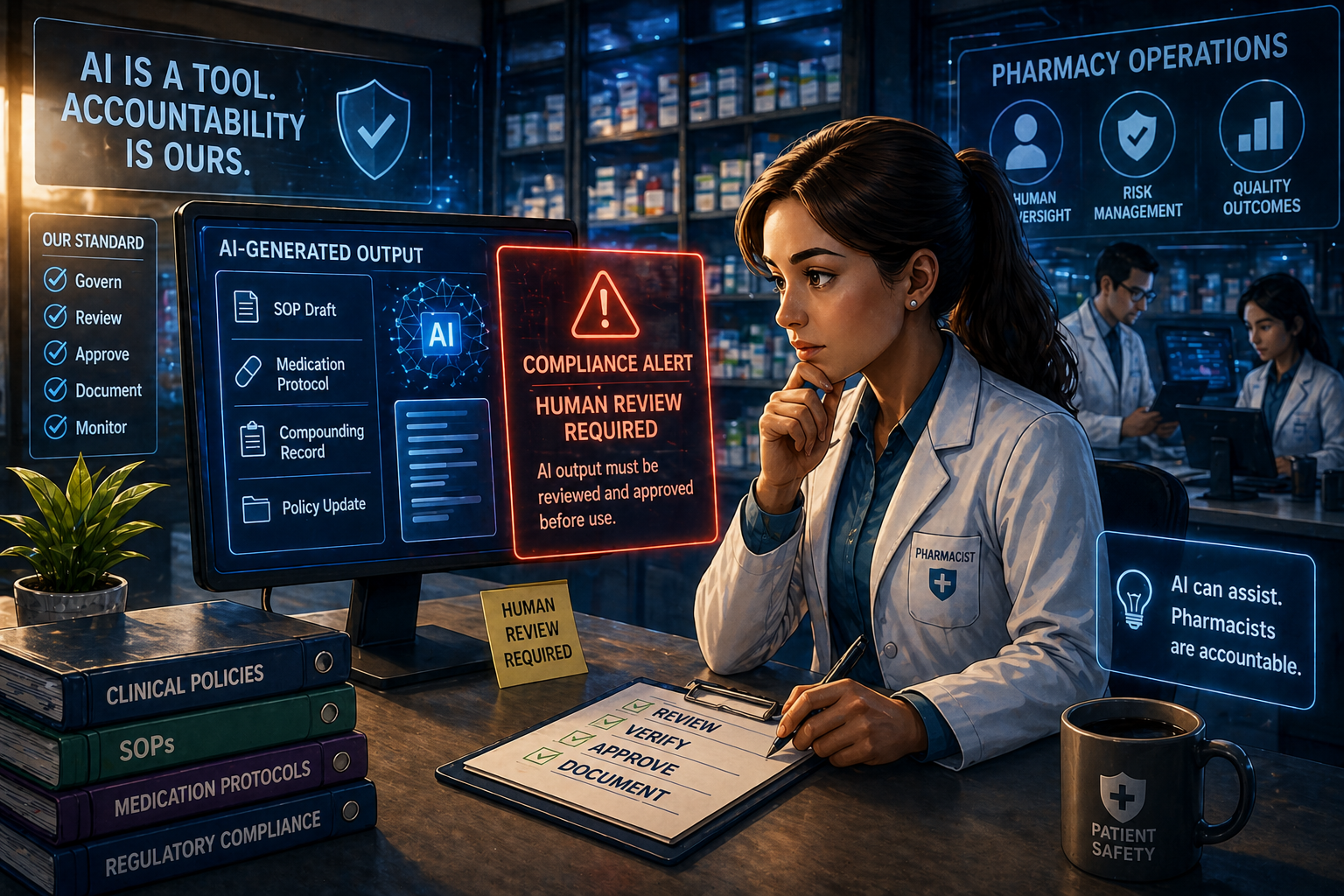

In pharmacy operations, AI tools are now embedded across medication reconciliation, clinical decision support, prior authorization processing, compounding documentation, and formulary management. Most of these implementations share the same failure pattern the FDA just cited: AI outputs feeding into clinical or compliance workflows without a defined, documented human review step.

21 CFR 211.22(c) gives the quality control unit responsibility for approving or rejecting all procedures and specifications that affect a drug product’s identity, strength, quality, and purity. The FDA’s action under that provision makes clear that the obligation does not disappear because a machine generated the content. Regulated entities own the output. That principle extends directly to health system pharmacy.

The UK’s General Pharmaceutical Council made the same position explicit on April 22, 2026, in its first formal AI position statement: pharmacy professionals remain “personally accountable for their professional practice, even when AI is being used.” The standard is not weakened by automation. It follows the pharmacist.

Four Findings That Define the New Enforcement Standard

AI-generated compliance documentation without human review is a cGMP violation, not a gray area. The FDA cited Purolea specifically for failing to ensure AI-generated documents were “accurate and actually compliant” with cGMP before use. The language in the warning letter is unambiguous: using AI in document creation requires the firm to review those documents. This applies directly to health systems using AI to generate medication protocols, policy updates, or SOP drafts without pharmacist sign-off.

“The AI didn’t tell us” is not a defensible compliance position. The most operationally significant finding: the firm was unaware of a process validation requirement because its AI agent had never flagged it. The FDA made clear that ignorance of regulatory requirements — regardless of the tool used to identify them — is not a mitigating factor. Regulatory obligation belongs to the regulated entity, not the software vendor.

FDA expects documented human-in-the-loop review for all AI-assisted regulated activity. The warning letter establishes that any AI-generated output used in a GMP context must be “reviewed and approved by an authorized human representative of the quality unit.” In health systems, this translates directly: if your team uses AI to draft clinical policies, review drug protocols, or generate formulary documentation, a pharmacist must review and approve that output — with documentation on record.

Regulatory scrutiny of AI in pharmacy is accelerating on both sides of the Atlantic. Twenty days after the FDA’s warning letter, the GPhC published a position requiring pharmacy owners to conduct due diligence on AI tools, maintain governance arrangements including risk assessments and data security policies, and monitor AI tools as part of routine quality management. These are not recommendations. They are the standards against which pharmacy professionals will be evaluated at inspection.

Operational Impact: Three Concrete Obligations

Health system pharmacy departments and telepharmacy operators need to treat this FDA warning letter as a line of demarcation. Before April 2, 2026, the regulatory status of AI in pharmacy workflows was largely undefined. After it, there is FDA-documented precedent: deploying AI without adequate human oversight in a regulated healthcare context is a violation.

Document every point where AI-generated content enters a regulated workflow. This includes medication protocol generation, SOP creation, formulary management, and any clinical decision support output that feeds into a care decision without a defined clinician review step. If you cannot name that checkpoint today, you do not have governance — you have deployment.

Establish a formal human review and approval process for AI outputs in those workflows. “We review it” is not a governance policy. The FDA will ask for documented evidence of who reviewed what, when, and according to what standard. Build the paper trail now, before an inspector asks for it.

Audit your AI vendor contracts for liability transfer. Most SaaS agreements explicitly transfer liability to the health system for how the tool is used. The FDA is not interested in your vendor’s terms of service. If a tool generates a non-compliant output and a pharmacist does not catch it, the health system owns the violation.
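What "documented evidence of who reviewed what, when, and according to what standard" could look like in practice is sketched below. This is a minimal illustration, not an FDA-specified format: the `ReviewRecord` schema, its field names, and the sample values are all hypothetical, and a real system would also need access controls and record retention.

```python
from dataclasses import dataclass, asdict
from datetime import date
import json


@dataclass(frozen=True)
class ReviewRecord:
    """One human review of one AI-generated document (hypothetical schema)."""
    document_id: str        # which AI-generated output was reviewed
    reviewer_name: str      # who reviewed it
    reviewer_role: str      # e.g. "pharmacist"
    review_date: date       # when it was reviewed
    standard_applied: str   # the standard the review was performed against
    approved: bool          # the approval decision on record


def is_complete(record: ReviewRecord) -> bool:
    """Return True only if every text field is non-blank."""
    text_fields = (
        record.document_id,
        record.reviewer_name,
        record.reviewer_role,
        record.standard_applied,
    )
    return all(value.strip() for value in text_fields)


def to_audit_line(record: ReviewRecord) -> str:
    """Serialize a record as one JSON line, suitable for an append-only log."""
    data = asdict(record)
    data["review_date"] = record.review_date.isoformat()
    return json.dumps(data, sort_keys=True)


# Illustrative values only; none of these come from the warning letter.
record = ReviewRecord(
    document_id="SOP-DRAFT-001",
    reviewer_name="J. Doe",
    reviewer_role="pharmacist",
    review_date=date(2026, 5, 1),
    standard_applied="internal SOP review checklist",
    approved=True,
)
print(is_complete(record))
print(to_audit_line(record))
```

The point of the sketch is that "we review it" becomes a record with a name, a date, and a standard attached, which is the kind of evidence an inspector can actually be handed.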

TheraIntel Perspective: This Signal Is for Everyone

The Purolea warning letter will be cited for years as the inflection point when AI in regulated healthcare moved from “use at your discretion” to “govern or get cited.” But here is what most analysis of this case misses.

Purolea was a small manufacturer with visible sanitation failures — insects in the facility, no separation between manufacturing and the outdoor dock environment. The other cGMP violations in that letter make this look like an operational disaster. It is tempting to read the AI citation as incidental to a broader basket case.

That reading is wrong.

The FDA named AI misuse as its own citation category. That does not happen for a one-off. It happens when a regulatory body signals to an entire industry that this class of failure now has a home in enforcement doctrine. The Purolea facility was just first. The signal is for everyone.

Health systems that have deployed AI in pharmacy workflows without governance documentation are not in the same situation as Purolea. They have functioning operations, trained staff, and far more compliance infrastructure. But they are operating under the same regulatory framework — and they are making the same fundamental error: treating AI as the accountable party.

AI doesn’t hold a pharmacy license. It doesn’t have a DEA number. It doesn’t sign off on medications. The pharmacist does. Every AI output in a clinical workflow requires a pharmacist to own it — not passively, but with documented review.

The organizations that will be ready for the next enforcement wave aren’t the ones with the most AI. They’re the ones with the clearest paper trail of human accountability. That is a governance problem, not a technology problem — and it is solvable before the next inspection cycle.

Three Actions Before Your Next Inspection Cycle

Map every AI touchpoint in your regulated workflows this quarter. Medication protocols, SOP drafts, formulary documentation, clinical decision support outputs — every one needs a named human reviewer and a documented approval step. Start with your highest-volume workflows and work down. If the mapping exercise reveals checkpoints that don’t exist, those are your immediate build priorities.

Build the documentation infrastructure, not just the policy. A policy that says “all AI outputs must be reviewed by a pharmacist” is a starting point. What the FDA asks for is evidence: reviewer name, review date, the standard applied, and the approval on record. Design the documentation workflow now so that evidence exists when the inspection arrives.

Conduct an AI vendor contract audit before Q2 closes. Pull every active AI vendor agreement. Identify where liability for tool outputs sits. Flag any agreement that places compliance ownership on the vendor rather than your organization. Those clauses may shift costs between the parties, but they are unlikely to shift the regulatory obligation itself, and you need to know that before a violation forces the issue.
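The mapping exercise in the first action can start as something very simple. The sketch below assumes a hypothetical `Touchpoint` inventory with made-up workflows and volumes. It flags any touchpoint that lacks a named reviewer or a documented approval step and orders the gaps by volume, highest first.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Touchpoint:
    """One place where AI output enters a regulated workflow (hypothetical)."""
    workflow: str                    # e.g. "SOP drafts"
    reviewer: Optional[str]          # named human reviewer, or None if unassigned
    approval_step_documented: bool   # is there a documented approval step?
    monthly_volume: int              # rough count of AI outputs per month


def governance_gaps(touchpoints: List[Touchpoint]) -> List[Touchpoint]:
    """Return touchpoints missing a reviewer or approval step, busiest first."""
    gaps = [
        t for t in touchpoints
        if t.reviewer is None or not t.approval_step_documented
    ]
    return sorted(gaps, key=lambda t: t.monthly_volume, reverse=True)


# Illustrative inventory; the names and volumes are invented for the example.
inventory = [
    Touchpoint("medication protocols", "A. Pharmacist", True, 40),
    Touchpoint("SOP drafts", None, False, 15),
    Touchpoint("formulary documentation", "B. Pharmacist", False, 120),
    Touchpoint("clinical decision support", None, True, 900),
]

for gap in governance_gaps(inventory):
    print(gap.workflow)
```

Anything this returns is an immediate build priority: a checkpoint that does not yet exist.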

“AI doesn’t hold a pharmacy license. It doesn’t have a DEA number. It doesn’t sign off on medications. The pharmacist does — and every AI output in a regulated workflow requires a pharmacist to own it with documented review. That is not a technology requirement. It is a professional accountability requirement.”