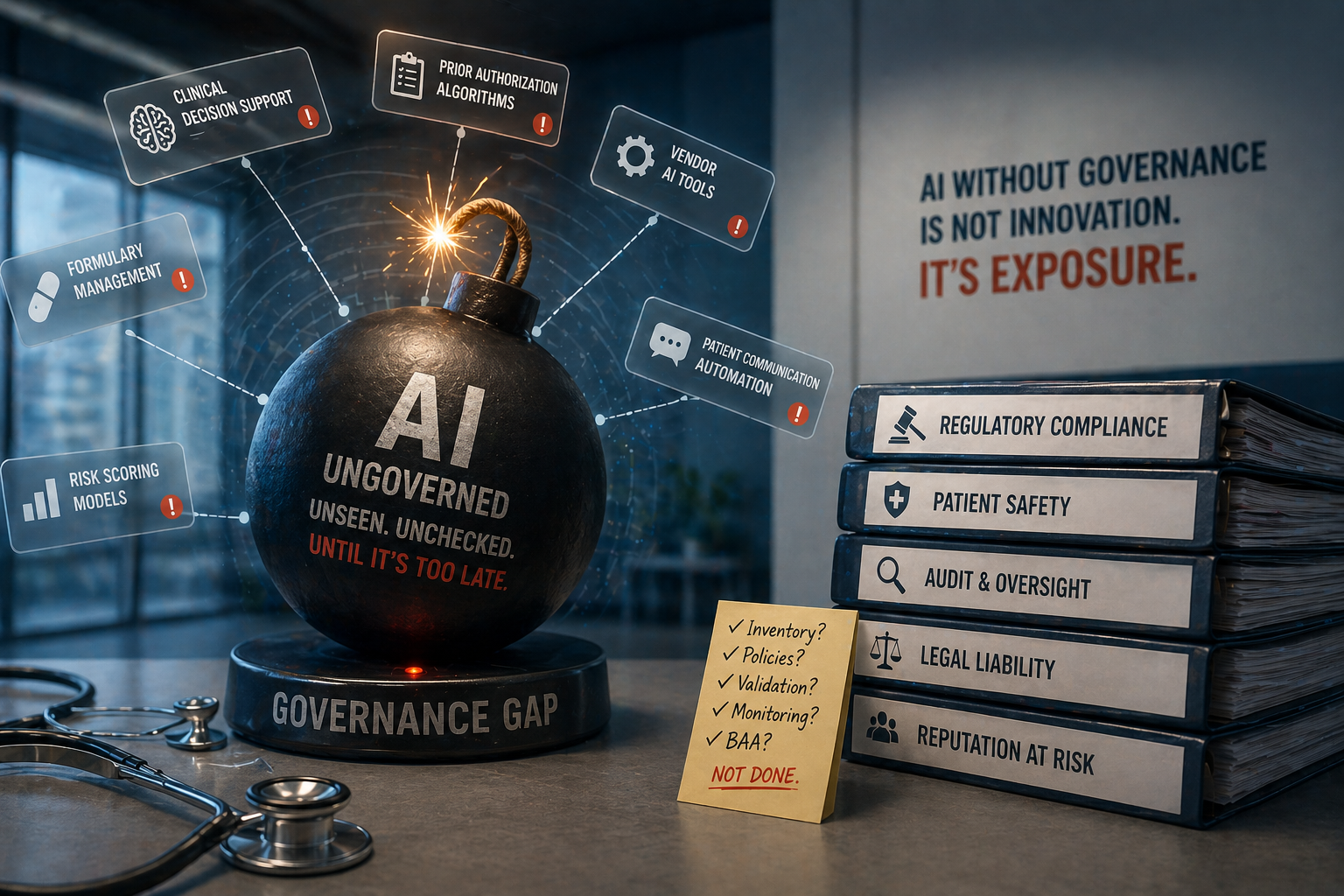

Three in four health systems have deployed AI. Only 18% have a formal policy governing how that AI gets used [Alignmt.ai, 2026]. That’s not a technology maturity problem. It’s an organizational liability, and regulators have already started acting on it.

The Problem

Start with the number most governance teams don’t want to say out loud: 70% of health systems have an AI governance committee. But only 30% maintain an enterprise-wide AI inventory [Kiteworks, 2026]. Committees with charters, executive sponsors, and quarterly meetings — governing AI they cannot see.

Much of that blind spot has a specific source: AI that arrives inside products you already own. Over half of health systems have no documented process for detecting when vendors silently embed AI into existing products [Alignmt.ai, 2026]. Your EHR vendor adds an AI summarization feature to your discharge workflow. Your prior authorization vendor upgrades its decision engine. Your pharmacy benefits manager starts running ML-based flagging on formulary exceptions.

Your governance committee doesn’t know. Your compliance team doesn’t know. Your quality leadership doesn’t know. But your organization owns every adverse outcome those tools produce.

In pharmacy specifically, the risk compounds. Clinical decision support tools, prior authorization engines, formulary management systems, and medication reconciliation platforms increasingly carry AI components, many of them updated by vendors on a continuous deployment cycle without notice to the customer. The clinical pharmacist reviewing a high-risk medication alert doesn’t know whether that alert came from a validated algorithm or a model the vendor pushed last Tuesday. ASHP’s 2025 statement on AI in pharmacy flags validation as the critical gap, citing its 2023 Pharmacy Forecast: 73% of panelists predicted health systems would be required to validate AI tool safety, yet only 37% reported being prepared to perform that validation [ASHP, 2025].

This matters more in April 2026 than it did two years ago because regulators have started acting on it. In April 2026 the FDA issued its first cGMP warning letter citing an AI governance failure, to Purolea Cosmetic Lab, for using AI-generated documentation in manufacturing operations without any quality unit review of the outputs [FDA, 2026]. The FDA’s finding was explicit: the failure wasn’t the AI’s. It was the organization’s, for delegating compliance obligations to a system without oversight. That letter applied manufacturing rules, but health systems operating unreviewed AI in clinical workflows should not assume the principle stops there.

Only 23% of health systems have Business Associate Agreements in place for their third-party AI solutions [Alignmt.ai, 2026]. For any tool that creates, receives, maintains, or transmits PHI on the system’s behalf, that’s a present-day HIPAA exposure, not a potential one: the BAA must be in place before the vendor handles PHI. For those tools, the health systems without BAAs aren’t “at risk.” They’re already non-compliant.

The Insight

The organizations facing the hardest reckoning aren’t the ones that moved slowly on AI. They’re the ones that moved fast and treated governance as a retrospective task — something to catch up on after the tools were already running.

Most AI governance failures in healthcare don’t come from bad intent. They come from a committee that approved AI in the abstract and handed implementation to operations, who handed it to IT, who handed it to the vendor, who handed it to a product manager in another state. The chain of custody breaks somewhere between the governance resolution and the go-live date. When something goes wrong, no one can produce the documentation.

The Qventus 2026 CIO report makes the outcome data clear: only 4% of health systems have achieved scaled AI implementation with measurable outcomes, while 42% are actively deploying across multiple use cases [Qventus, 2026]. That gap, 42% deploying versus 4% scaled with measurable results, is the governance gap expressed in ROI terms.

The same report found that 80% of health system leaders have difficulty measuring AI ROI, and 39% have no performance benchmark at all [Qventus, 2026]. That’s not a measurement methodology problem. It’s a governance infrastructure problem. You can’t measure outcomes for tools you haven’t inventoried.

Here’s the take that governance-averse leaders won’t want to hear: every ungoverned AI tool running in your health system is a liability your risk management team hasn’t priced. The liability isn’t hypothetical — it’s latent. It will be triggered by a patient safety event, a payer audit, or a regulatory inspection. When it is, your response will be shaped entirely by what documentation exists at that moment. If your AI inventory is empty, your defense is empty.

“70% of health systems have an AI governance committee. Only 30% keep an inventory of the AI they’re actually running. You can’t defend what you can’t document, and the regulatory environment in 2026 is done waiting.”

Real-World Application

The health systems closing the governance gap aren’t rebuilding from scratch. They’re making four structural changes that take weeks, not years.

| Governance Component | What’s Missing in Most Systems | What Closing the Gap Looks Like |
|---|---|---|
| AI Inventory | No enterprise-wide list of active AI tools | Quarterly vendor contract audit; AI feature disclosure required at onboarding |
| BAA Coverage | 77% of health systems lack BAAs for their third-party AI tools | Retroactive BAA review within 90 days; new vendor template requires an AI BAA at contract signing |
| Vendor Change Notification | No process to detect silently embedded AI updates | Contractual requirement: vendors notify the governance team within 30 days of any AI feature deployment |
| Outcome Accountability | No defined ROI benchmarks for deployed tools | Each AI tool has a named operational owner responsible for quarterly outcome reporting |

The pattern across systems where governance actually works is consistent: one person owns the AI inventory and is accountable for its accuracy. Not a committee. Committees set policy; one person is accountable for the list. Conflating those two functions is why governance documentation looks strong in board presentations and collapses under a regulatory audit.

A governance framework published by the Catholic Health Association in late 2025 — adopted by several large integrated delivery networks — establishes an AI Steward role with direct reporting to the CISO and CMO, responsible for inventory completeness, BAA status, and quarterly outcome review [CHA, 2025]. The reason this model works isn’t that it’s complex. It’s that naming one person changes the accountability dynamic entirely.

Executive Takeaway

Three things a health system leader can do this week:

- Run the inventory audit. Pull every vendor contract from the last 36 months and flag any product with AI capabilities, whether or not those features are currently active. Flag every AI tool that handles PHI without a signed BAA. This isn’t an IT task. It’s a C-suite accountability item your legal and compliance teams need before your next payer audit or CMS survey.

- Update your vendor contract template today. Every new AI vendor agreement must include an AI feature disclosure clause requiring the vendor to notify your governance team within 30 days of deploying any new AI capability into your environment. If an existing vendor won’t accept that clause at renewal, that’s a decision point, not an inconvenience.

- Separate governance committee from governance accountability. Designate one operational owner for your enterprise AI inventory, and make the inventory’s accuracy and completeness part of that person’s performance review. Governance by committee diffuses accountability into inaction; one named owner concentrates it.
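The inventory audit in the first step can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not a compliance tool: it assumes contract metadata has already been extracted into simple records, and the `VendorContract` fields, the `audit` function, and the vendor names are all hypothetical. The 36-month lookback (approximated as 3 * 365 days) and the 30-day disclosure window come from the recommendations above. It also assumes a BAA is required only where the tool handles PHI. It builds the AI inventory and flags PHI-handling tools without a BAA, tools with no named owner, and AI deployments disclosed late or not at all.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Tuple

# Windows taken from the recommendations in the article; 36 months is
# approximated as 3 * 365 days (an assumption, not a contractual rule).
LOOKBACK_DAYS = 3 * 365
NOTIFY_WINDOW_DAYS = 30


@dataclass
class VendorContract:
    """Hypothetical record for one contracted product."""
    vendor: str
    product: str
    signed_on: date
    has_ai_capability: bool  # flag even if the AI feature is not switched on
    handles_phi: bool        # assumption: BAA required only when PHI is handled
    baa_signed: bool
    owner: Optional[str] = None                    # named operational owner
    ai_deployed_on: Optional[date] = None          # when an AI feature shipped
    governance_notified_on: Optional[date] = None  # when governance was told


def audit(contracts: List[VendorContract],
          as_of: date) -> Tuple[List[str], List[Tuple[str, str]]]:
    """Return (AI inventory, findings) for contracts inside the lookback."""
    cutoff = as_of - timedelta(days=LOOKBACK_DAYS)
    inventory: List[str] = []
    findings: List[Tuple[str, str]] = []
    for c in contracts:
        # Skip contracts outside the lookback window or with no AI capability.
        if c.signed_on < cutoff or not c.has_ai_capability:
            continue
        tool = f"{c.vendor}/{c.product}"
        inventory.append(tool)
        if c.handles_phi and not c.baa_signed:
            findings.append((tool, "handles PHI without a signed BAA"))
        if not c.owner:
            findings.append((tool, "no named operational owner"))
        if c.ai_deployed_on is not None:
            deadline = c.ai_deployed_on + timedelta(days=NOTIFY_WINDOW_DAYS)
            notified = c.governance_notified_on
            # Late if notice came after the deadline, or never came and the
            # deadline has already passed as of the audit date.
            late = notified is not None and notified > deadline
            overdue = notified is None and as_of > deadline
            if late or overdue:
                findings.append(
                    (tool, f"AI deployment not disclosed within {NOTIFY_WINDOW_DAYS} days")
                )
    return inventory, findings


# Example run with made-up vendors.
contracts = [
    VendorContract("EHRCo", "discharge summarizer", date(2024, 5, 1),
                   has_ai_capability=True, handles_phi=True, baa_signed=False,
                   owner="J. Rivera"),
    VendorContract("PAVendor", "prior auth engine", date(2025, 1, 15),
                   has_ai_capability=True, handles_phi=True, baa_signed=True,
                   ai_deployed_on=date(2026, 1, 10),
                   governance_notified_on=date(2026, 3, 1)),
    VendorContract("OldVendor", "legacy scheduler", date(2021, 6, 1),
                   has_ai_capability=True, handles_phi=False, baa_signed=False),
]
inventory, findings = audit(contracts, as_of=date(2026, 4, 15))
for tool, issue in findings:
    print(f"{tool}: {issue}")
```

The output is a plain list of findings that the named inventory owner can review and sign off on each quarter, which keeps accountability with one person rather than with the committee.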

The 4% of health systems with measurable AI outcomes didn’t get there by moving slower. They built the infrastructure to know what they were running before they ran it. In 2026, that’s the competitive advantage most systems are still underestimating.